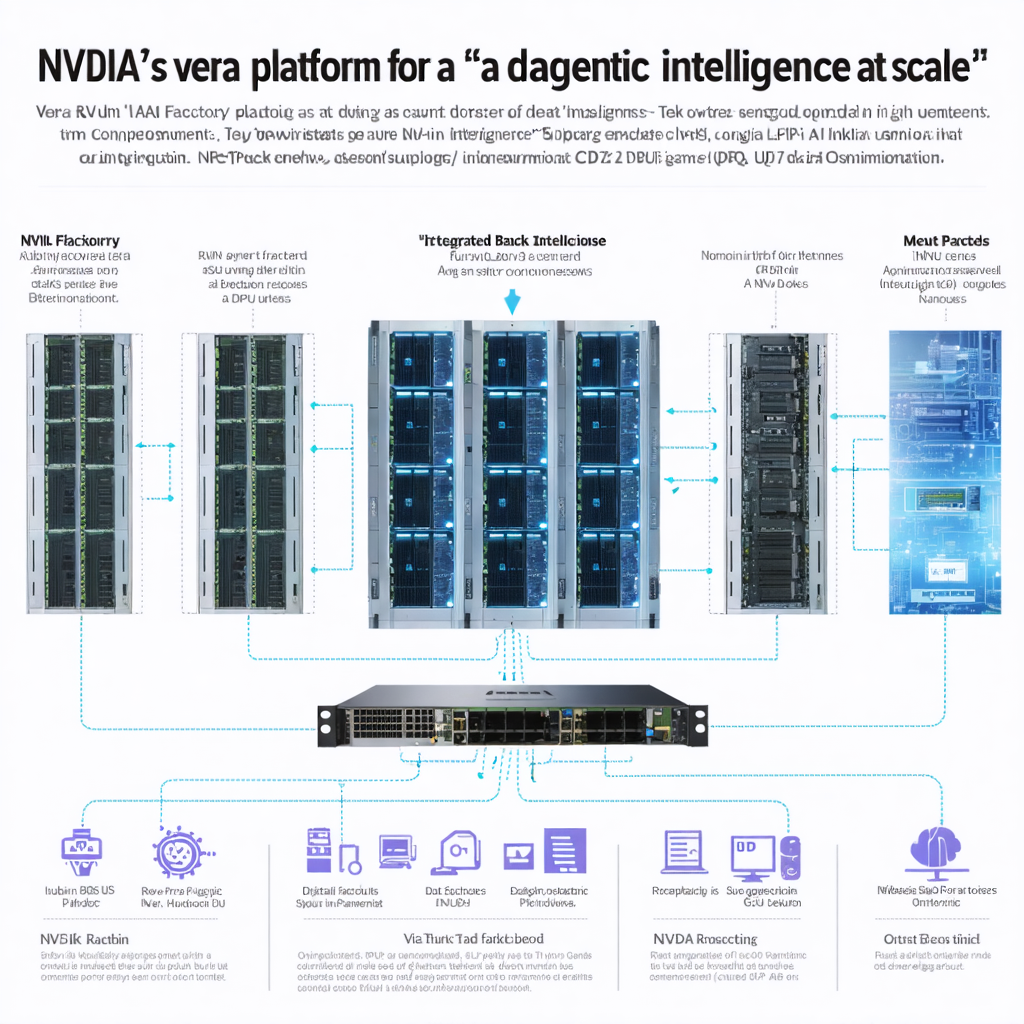

Agentic AI is pushing the industry beyond chatbots and toward systems that can perceive, reason, choose tools, and carry out multi-step tasks with limited supervision. NVIDIA’s answer is Vera Rubin, announced at GTC on March 16, 2026 as a platform designed for every major phase of AI work: pretraining, post-training, test-time scaling, and real-time agentic inference. Rather than introducing a single chip, NVIDIA presented an integrated stack that combines the Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 Ethernet switch, and the newly added Groq 3 LPU. According to the company, these components are already in full production and can be deployed together as a POD-scale AI system. (investor.nvidia.com)

The flagship configuration is the Vera Rubin NVL72 rack, which links 72 Rubin GPUs with 36 Vera CPUs. NVIDIA claims that, compared with Blackwell, this design can train large mixture-of-experts models with roughly one-fourth the number of GPUs while delivering up to 10 times higher inference throughput per watt at one-tenth the cost per token. Just as important, the Vera CPU is not a conventional supporting processor: it was built specifically for AI factories, with 88 custom Olympus cores, up to 1.2 TB/s of memory bandwidth, and 1.8 TB/s of coherent CPU-GPU bandwidth through NVLink-C2C. In other words, Vera Rubin is meant to remove the hidden bottlenecks that appear when vast numbers of models and agents must run continuously at scale. (investor.nvidia.com)
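To make these ratios concrete, here is a minimal Python sketch that applies them to a made-up Blackwell baseline. The baseline values, the `Projection` class, and the `project_from_baseline` function are hypothetical and chosen only for illustration; the multipliers are NVIDIA's stated "roughly" and "up to" claims, not measured results.

```python
from dataclasses import dataclass

# Rack composition stated in the article.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36

# NVIDIA's claimed ratios relative to Blackwell (best-case figures).
TRAINING_GPU_FRACTION = 1 / 4     # roughly one-fourth the GPUs for MoE training
THROUGHPUT_PER_WATT_GAIN = 10     # up to 10x inference throughput per watt
COST_PER_TOKEN_FRACTION = 1 / 10  # one-tenth the cost per token


@dataclass
class Projection:
    training_gpus: float
    tokens_per_joule: float          # throughput per watt = tokens per joule
    cost_per_million_tokens: float


def project_from_baseline(baseline_gpus, baseline_tokens_per_joule,
                          baseline_cost_per_million_tokens):
    """Apply the claimed best-case ratios to a hypothetical Blackwell baseline."""
    return Projection(
        training_gpus=baseline_gpus * TRAINING_GPU_FRACTION,
        tokens_per_joule=baseline_tokens_per_joule * THROUGHPUT_PER_WATT_GAIN,
        cost_per_million_tokens=(
            baseline_cost_per_million_tokens * COST_PER_TOKEN_FRACTION
        ),
    )


# Hypothetical baseline values, invented for illustration only.
projection = project_from_baseline(
    baseline_gpus=1024,
    baseline_tokens_per_joule=2.0,
    baseline_cost_per_million_tokens=1.00,
)

gpus_per_cpu = GPUS_PER_RACK / CPUS_PER_RACK

print(f"GPUs per Vera CPU in an NVL72 rack: {gpus_per_cpu:g}")
print(f"Training GPUs needed (claimed): {projection.training_gpus:g}")
print(f"Tokens per joule (claimed upper bound): {projection.tokens_per_joule:g}")
print(f"Cost per million tokens (claimed): {projection.cost_per_million_tokens:.2f}")
```

Because the throughput and cost figures are "up to" claims, the projected values are best read as an upper bound on the improvement, not a forecast for any particular workload.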

What makes the story especially interesting is NVIDIA’s broader vision. The company increasingly describes data centers as “AI factories” that turn power, silicon, and data into “intelligence tokens.” To support that idea, it has also released a Vera Rubin DSX reference design and an Omniverse DSX blueprint so operators can build digital twins of future AI factories, simulate power and cooling, and optimize performance before construction begins. On the deployment side, NVIDIA has said that AWS, Google Cloud, Microsoft Azure, and Oracle Cloud will be among the first cloud providers to offer Vera Rubin-based instances. (investor.nvidia.com)

For learners of English, Vera Rubin is a useful case study in the language of technological inflection points. The key word is not merely “faster,” but “orchestrated”: agentic AI requires memory, networking, storage, security, and inference to work as a coordinated whole. If NVIDIA’s roadmap holds, Vera Rubin may matter not only because it is powerful, but because it reframes AI infrastructure as an industrial system for autonomous digital work. NVIDIA and OpenAI have even said the first gigawatt of their next deployment phase is planned for the second half of 2026 on the Vera Rubin platform, suggesting that this architecture is meant to move quickly from announcement to real-world scale. (investor.nvidia.com)